Users have been gaming YouTube by purchasing fake views practically since the platform began. Some claim they’re merely trying to jumpstart their own imminent viral success; others are less nuanced about their intentions. Either way, the mere existence of fake views draws the authenticity of every YouTube video into question, especially given recent worries over the popularity of the alt-right and conspiracy peddlers on the platform. Is Alex Jones’ propagandist nonsense actually trending, or were the numbers bumped? What about that crisis actor video? Or flat earth? Or Jordan Peterson? The problem is that the economy for fake views has only thrived, and YouTube has only made it more difficult to see the effects of those views.

Views are the currency of YouTube. More views often translate into a higher ranking in user search results or better circulation numbers in the sidebar, both of which affect the amount of money YouTubers make via ad impressions. What’s more, views are the measure by which the outside world assigns worth, a credential that makes videos more visible to vulnerable populations with malleable views. A high view count carries with it an air of legitimacy: approval from YouTube and its users alike. It’s what users are supposed to strive for.

YouTube’s fake view problem is practically as old as the site itself. Viewbots were wreaking havoc on YouTube as early as 2009, and by 2011 the problem had gotten bad enough to attract media attention. Back then, fake views were easy to buy, but they were also easy to spot: YouTube still gave the public access to individual video statistics, which would indicate whenever, say, 5,000,000 random views happened to appear from one source.

YouTube’s latest algorithm appears to weigh views paired with interactions, in the form of a like or comment, much more heavily. Buying likes doesn’t remedy this: even if both services are bought from the same panel, the likes won’t come from the same IP address as the views. As foolproof as this system may seem, viewbotters appear to have already found a workaround. They’re called “non-drop views” or “real views” (though they’re not actually, you know, real), and panel owners claim they’re able to game the algorithm. It’s possible to compare engagement (i.e. likes and comments) to view totals, but that’s hardly a science. Since viewbotters have managed to stay ahead of the curve, and YouTube no longer gives the public access to video statistics, we are more in the dark than ever about what is actually popular and what is being forced into the cultural conversation through manipulation.
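For the curious, the engagement-versus-views comparison mentioned above can be sketched in a few lines of Python. This is only a rough illustration of the idea, not a method used by YouTube or anyone quoted here: the `VideoStats` type, the sample numbers, and the `min_rate` cutoff are all made up for the example, and a low ratio proves nothing on its own.

```python
from dataclasses import dataclass


@dataclass
class VideoStats:
    """Public counters for one video (all values below are invented)."""
    title: str
    views: int
    likes: int
    comments: int


def engagement_rate(video: VideoStats) -> float:
    """Return (likes + comments) per view, or 0.0 for a video with no views."""
    if video.views <= 0:
        return 0.0
    return (video.likes + video.comments) / video.views


def looks_suspicious(video: VideoStats, min_rate: float = 0.005) -> bool:
    """Flag videos whose engagement rate falls below an arbitrary cutoff.

    min_rate is a hypothetical threshold chosen for illustration only.
    """
    return engagement_rate(video) < min_rate


videos = [
    VideoStats("organic-looking clip", views=40_000, likes=1_200, comments=300),
    VideoStats("suspiciously quiet clip", views=500_000, likes=150, comments=20),
]

for v in videos:
    print(f"{v.title}: rate={engagement_rate(v):.4f}, flagged={looks_suspicious(v)}")
```

A heuristic like this is easy to fool, since likes and comments can be bought too, and it will also flag perfectly legitimate videos that people simply watch without reacting.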

“Hey guys, I've just started CPA marketing,” writes a user on BlackHatWorld, the online marketing forum known for its, uh, moral grey areas. CPA (or cost per action) marketers get paid when users perform some sort of action, like clicking on an affiliate link. “I know the basics, but I'm learning about sending traffic to the CPA offers. I chose to use YouTube (if anyone has better method, would appreciate to know!) for bringing the traffic and that's why I made a video. My question is: how to get first 1000-2000 views? You know, no one will watch the video if it has very low view count (like 100, 200, 500 etc.). So I thought to have at least 1000/2000 views on my video first and then spread the video.”

The first in-depth reply nonchalantly recommended buying a couple hundred views as a kickstarter. “On my last video I only bought 500 views to start and YouTube sent me 2000 more,” said the second user in response. “I'm happy with the 2500 views because it was just my third video and my subscribers are growing one by one. If I used my own voice and had more videos, I think the results would be even better.”

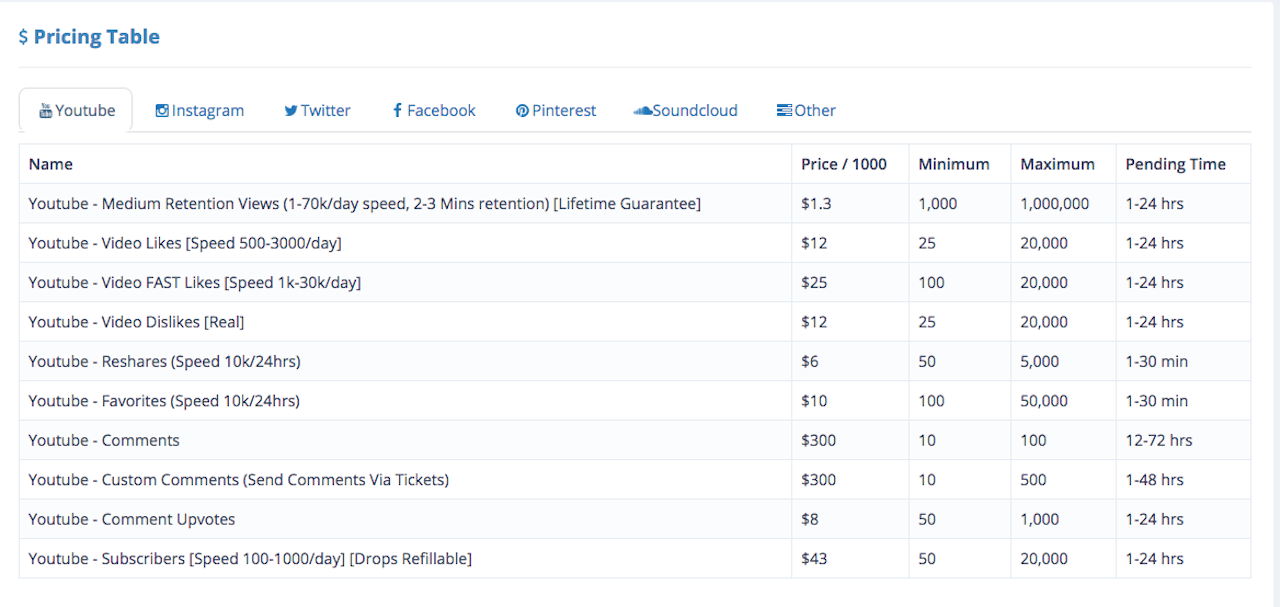

In the world of fake YouTube views, 500 is essentially nothing. Most purchases range from the tens of thousands well into the hundreds of thousands. And with prices usually under a dollar per 1,000 views, buying in bulk makes sense, in a way.

The allure of monetization and virality has only made the practice more tempting. Views can be bought from Social Media Marketing (SMM) panels, digital vendors who provide traffic and/or engagement to clients on a variety of social media platforms through the use of automated (usually bot-driven) services. Most vendors either operate through forum postings and other decentralized pseudo-advertisements, or have a strangely candid website designed to promote their wares.

Take, for example, vendor “YoutubeSupply,” who was active from 2013 to 2017. Though it had a dedicated website (which is now offline), YouTubeSupply’s BlackHatWorld post was obviously popular, garnering roughly 115 pages’ worth of reviews and comments. In addition to functioning as a form of advertising, posts such as these seem to exist in order to soothe the worries of first-time customers by giving the vendor a semi-legitimate-seeming front.

Obviously, YouTube is far from a fan of this. The company’s been engaged in a game of whack-a-mole with viewbotters for years. The cycle is always the same: YouTube changes its algorithm, viewbotters suffer. Eventually a select few figure out a workaround and business returns to normal. Repeat ad nauseam.

The company’s harshest crackdown came a little over a month ago, when YouTube announced that it would no longer take a conservative approach to policing third-party (read: illegitimate) views. Instead, it adopted a guilty-until-proven-innocent approach, which essentially freezes the view counts of all videos suspected of viewbotting until an audit can be conducted.

Unsurprisingly, this devastated the viewbotting community. In the days following the update, SMM panel after SMM panel went offline, leading to a noticeable community-wide freakout. In a thread comically titled “The current state of YouTube views (February 2018),” a number of BlackHatWorld users commiserated.

“Not so long ago a massive disruptive update was implemented inside the Youtube views algorithm,” wrote one commenter. “And like dominoes, each big and small player in the SMM niche fell like dominoes, cancelling practically every single views orders that was put into their panels. From my understanding, some highly known methods were patched, and now the only few methods left are what some providers are calling ‘real views’ , ‘slow as hell views’, which cost a ton of $$$ to produce.”

Most of these panels claim that, despite all appearances, the views they’re selling are “real” (whatever that means). Popular sites like QTube and Social Media Garden offer little information to back up these assertions. Their FAQs are sparse and full of vague one-line answers like, “Our views come from one of our websites that we promote your video with,” and “Yes, our views all come from real people.” Similarly, vendors insist that buying views from them won’t put a user’s channel at risk, even though YouTube itself states otherwise.

"We take abuse of our systems, such as attempts to artificially inflate video viewcounts, very seriously, and take action against known abusers, including termination of their YouTube accounts," a YouTube spokesperson told The Outline. "YouTube continues to employ proprietary technology to prevent the artificial inflation of a video’s viewcount by spam bots, malware and other means. As part of our long-standing effort to keep YouTube authentic, we periodically audit the views a video has received and validate the video’s view count, removing fraudulent views as new evidence comes to light."

Despite this harsh language, few channels have been explicitly taken down for viewbotting. More often than not, the fake views are simply removed (or not counted to begin with), and life goes on. Since viewbotting is essentially impossible to prove from the outside (unless you happen to accidentally post an image of the “Buy Views” tab to your public Instagram story), it often remains a mere accusation. The only thing that’s definitely being hurt by all of this is YouTube’s image (not that it was exactly pristine to begin with, especially in the last few months). The company has been embroiled in scandal after scandal, from Elsagate to crisis actor conspiracies to wanton moderators, all of which seem to point to the same conclusion: YouTube doesn’t have as much control over its platform as we thought. And it’s far from a new problem.