Earlier this week, we wrote about how Google can highlight erroneous or unconfirmed reports in the immediate aftermath of breaking news. But these rapidly shifting results are quickly lost in time as the search engine’s algorithms self-correct, making it difficult for outsiders, including journalists, to hold the search engine accountable for spreading potentially harmful information.

There is one group working on a concept for a system that would establish a record of search engine results. The idea is similar to the Internet Archive, which downloads periodic copies of websites, but more complicated since search engines display different results depending on the time as well as the location and history of the user. The solution for tracking such a complicated system is described in a prospectus for the Sunlight Society, founded by a group of 20 researchers under the banner of the American Institute for Behavioral Research and Technology (AIBRT), a nonprofit in Vista, California, that conducts research in psychology and tech.

The concept is similar to Nielsen Media Research’s longstanding system that collects information about audience size and demographics of television viewers through meters installed in households around the country. But instead of monitoring TV habits of real people, the system would monitor their internet use. This would require a worldwide network of paid collaborators who would provide the Sunlight Society with access to their search results.

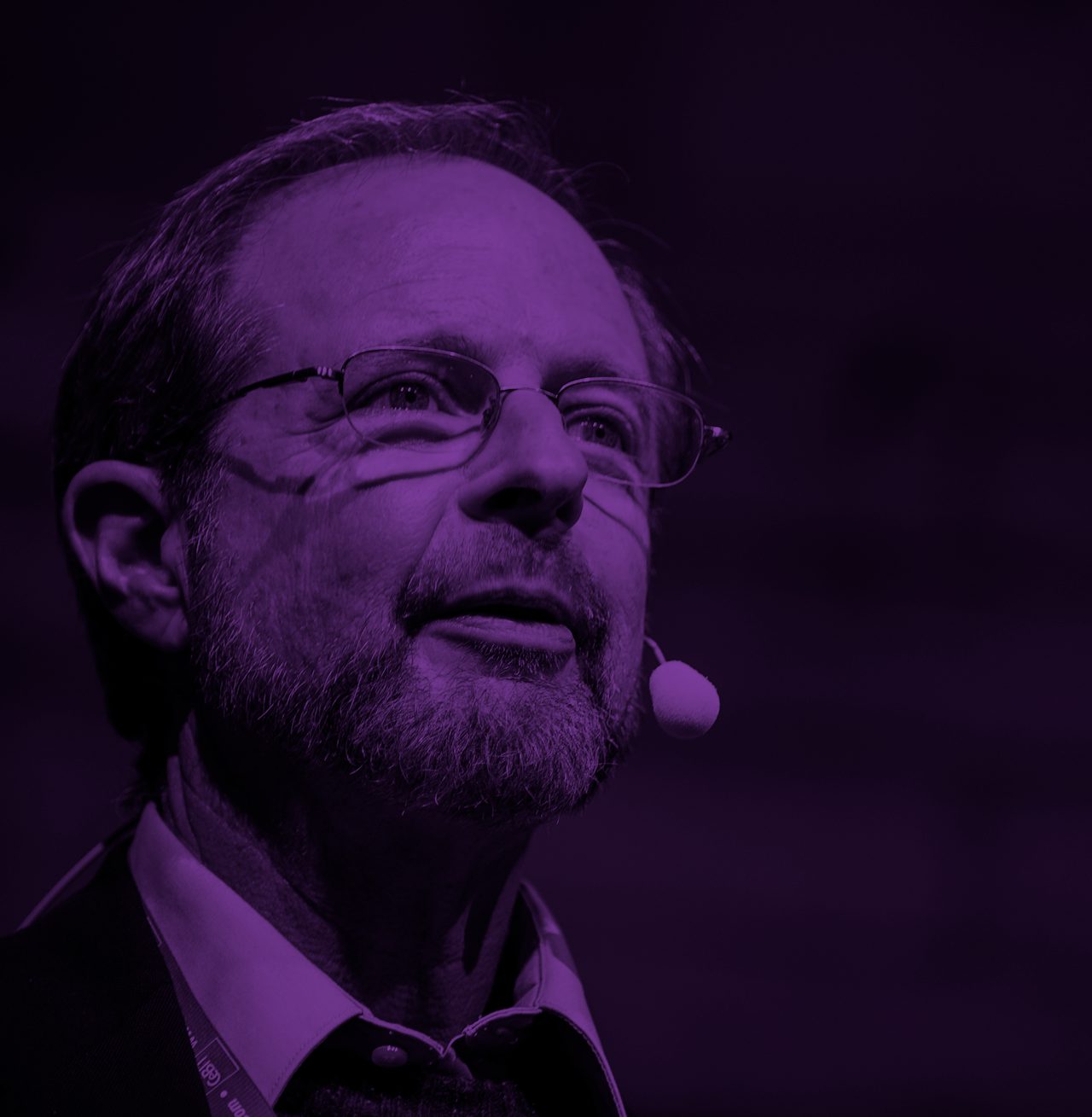

“This is about new methods of influence that have never existed before, and that are affecting the decisions of billions of people every day without their knowledge, and without leaving a paper trail,” said Robert Epstein, a 64-year-old researcher, book author, and former editor-in-chief of Psychology Today.

In one set of experiments published in the Proceedings of the National Academy of Sciences, Epstein and a collaborator had thousands of undecided voters in the United States and India, in person and over Mechanical Turk, use a mock search engine called Kadoodle. Kadoodle tested whether displaying results that the researchers determined to be biased in favor of a candidate higher up in the rankings could have an impact on stated voting intention. The researchers concluded that it would be “relatively easy” to boost a candidate by at least 20 percent and the duo coined a new term: the “search engine manipulation effect.”

In another project, which was published as a white paper on AIBRT’s website, Epstein recruited a network of anonymous confidants who provided access to their search histories during the run-up to the 2016 presidential election, comprising more than 13,000 election-related searches. Overall, he says, results that were “biased in Mrs. Clinton’s favor” tended to float to the top of the list, a claim that picked up mainstream coverage (though the paper does not describe how the researchers decided a result was “positive”). Though he told me he doesn’t believe Silicon Valley executives are actively altering search results to influence elections, Epstein worries that they don’t appreciate the power their platforms hold over the electorate.

“We need to see, and capture, record, what the algorithms are showing people,” Epstein said. Even if Google and other gatekeepers published their code, he said, that wouldn’t be enough to demonstrate the different results that different users receive for the same query at different moments in time. “Looking at the actual code is useless. We need to see what people are seeing on their screens.”

Epstein’s findings about the 2016 election have not been peer-reviewed, and Google called them “nothing more than a poorly constructed conspiracy theory.” Epstein also has a peculiar history with Google. In 2012, the search giant started displaying a warning that his website had been compromised by malware. Epstein couldn’t find a virus, and he fired off an angry email copied to Larry Page, a Google attorney, his congressman and journalists at the New York Times, The Washington Post, and Wired. It later turned out that his site had in fact been infected, though Epstein claimed the danger to users was minimal. He also sometimes drops hints of a conspiratorial worldview. He refuses to communicate over Gmail, since it’s owned by Google, and alludes to black-hat marketers and cash payments that, he said, were involved in the formation of the Sunlight Society.

Despite these caveats, the idea of a network of search engine monitors is compelling. Search engines like Google and other big tech companies like Facebook control what information regular citizens receive and how it’s packaged. This information is ephemeral, highly personalized, and controlled in part by machine learning, all factors that make it harder to understand what impact these systems have on culture and democracy.

“We are looking at the power that these algorithms have to shift opinions, and there’s never been anything like this in human history,” Epstein told me. In the future, he hopes that a network of human monitors watching what unfolds on the web could “track manipulative online content (like fake news stories) as it is proliferating,” he said in an email. In an era when online hoaxes spread like gossip, taking in celebrities, politicians, and journalists, an independent watchdog doesn’t seem like a bad idea. We now know that the Russian government propagated fake news stories. What we don’t know is how widespread that effort was, or how much it affected people. Maybe an archive like Epstein’s could help.

In March, 12 researchers at institutions including Stanford University, the University of Maryland, and the University of Amsterdam pledged support for Epstein’s work. The Sunlight Society’s founding members include computer science, engineering, and law faculty at Stanford, Princeton, and King’s College. Epstein said the system was unlikely to deploy before the second half of 2018.

“What I’ve realized is that the deliberateness, the malice, is a small problem compared to the negligence,” Epstein said. “Even if you’re hands off, your algorithms are going to be making decisions all the time about filtering and ordering. I actually find that more disturbing — the idea that elections around the world are being determined by algorithms.”

Clarification: This article has been updated to clarify the effect Epstein found in a study of undecided voters in the U.S. and India.